The Science of Wind Turbine Syndrome: Part 1

Jul 24, 2013


The real gold standard of science is not “peer review”; it’s something called “reproducibility.”


—Curt Devlin (Fairhaven, MA), 7/1/13

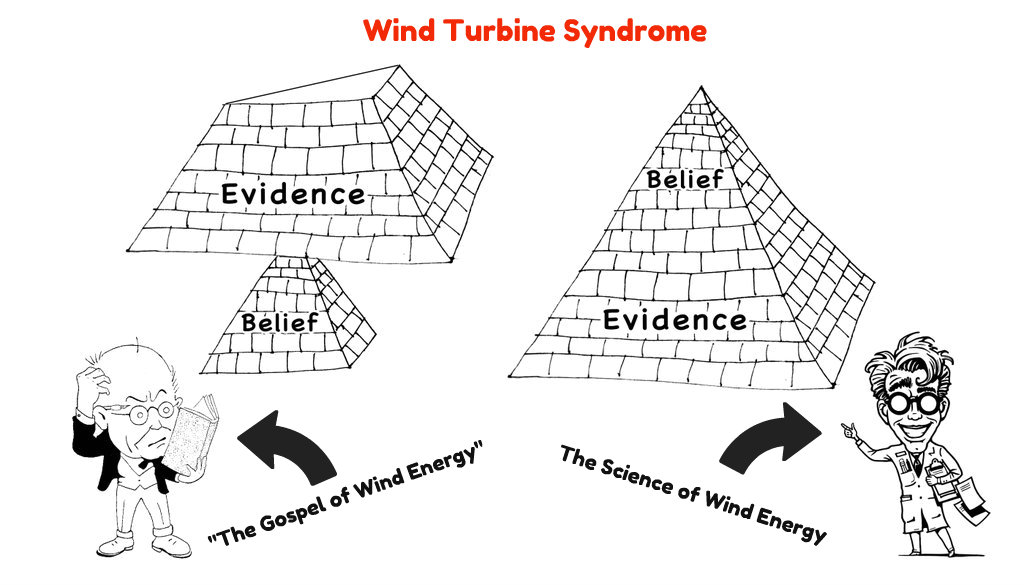

Science has become a rarefied business these days. It is conducted far outside the bounds of the average person’s experience. As a result, it’s easy to mislead people about how science actually works and what counts as good science or bad science. Most people know that evolution is considered to be good science and creationism is considered to be bad science, but they still would be largely at a loss to explain why.

When told that the “gold standard” of science is peer review, most people tend to accept this as gospel. If science has become so sophisticated that only experts in the field can understand it, then surely it makes sense to have any scientific conclusions evaluated by other experts in that field. Right?

Unfortunately, the idea that peer review is the gold standard of science is absolutely false.

To the extent that peer review is based on authority or expert opinion, it is completely contrary to the true spirit of science. Peer review is not a bad practice, but its true purpose is to improve the work and decide if it is worthy of being published. You could say that peer review is the gold standard of publication—nothing more and nothing less.

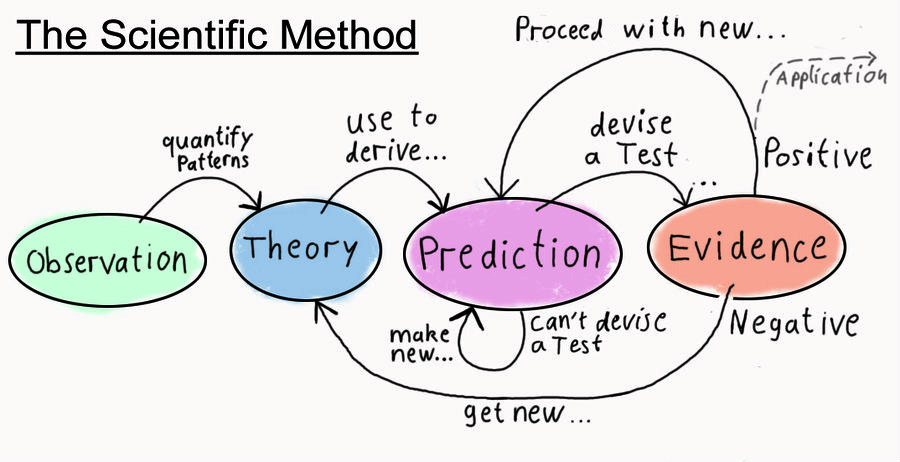

At its heart, the real core of science is a handful of simple ideas called the scientific method. It involves careful observation, precise measurement, and accurate reporting of both the conditions under which measurements were made, and the measured results themselves. The whole point of the scientific method is to eliminate human authority, opinion, or bias of any kind from consideration.

The real gold standard of science is something called reproducibility. Simply put, this means that if you do the same experiment under the same conditions and same measurement precision, you get the same results.

You could say the mantra of science is “see for yourself.”

If we apply the standard of reproducibility to the findings reported by Dr. Nina Pierpont in her book, “Wind Turbine Syndrome” (WTS), her conclusions hold up remarkably well because her work is based on very careful observation and measurement. Her reporting of experimental conditions and measurements is extremely detailed and meticulous—even to the point of publishing all the raw data in her book. (No one who is interested in selling a book puts raw data in it.) Presumably, Pierpont did this to ensure that serious defects would be obvious. This type of transparency and disclosure is a signature of scientific integrity.

Predictability is also a very important element of good science. The findings of a study should support specific predictions about outcomes under certain conditions. As a resident of Fairhaven, Massachusetts, where two 1.5 MW industrial wind turbines were sited in a dense neighborhood two years ago, WTS has proven to be an excellent predictor of the adverse health effects that have occurred since then. I have absolutely no doubt that the results of Pierpont’s study could be reproduced in Fairhaven tomorrow.

Pierpont’s critics within the wind industry could easily fund an independent study to determine whether her experiments can be reproduced, but they never have and never will. Perhaps they already know too well that these attempts will only result in confirming her findings. Pierpont’s findings are simple enough. When people live near wind turbines, they experience nausea, dizziness, sleeplessness and stress-induced illnesses. When they get away from them, they begin to feel much better and may recover completely.

Just the other day, I heard a science editor on public radio, Heather Goldstone, leveling criticism that WTS is not peer reviewed and parroting the claim that peer review is the gold standard of science. Anyone who has read WTS (as I have) knows that Goldstone is factually incorrect. Pierpont sought review, advice, and criticism from her peers throughout her research and publication. This group included highly regarded clinicians, acoustic experts, researchers in neurology and public health, among others. The referee reports (aka peer reviews) are all included in the book, for those who trouble themselves to actually read it.


Goldstone also claimed that Pierpont’s study was flawed because she studied subjects only in one small location. This is also factually incorrect, proving only that our “science” editor, Goldstone, had never bothered to actually read WTS herself. (Maybe she got this information about the book from “good authority,” perhaps a friend in the wind industry?)

As Pierpont explains in the book, she went to great lengths to identify subjects from other geographies and countries to avoid this limitation in her case study. That is why she had to restrict the participants to those who spoke English, to ensure that she could clearly understand their reports.

By contrast, the process of peer review does not stand up so well under close scrutiny. Several studies have shown that the peer review process can be fraught with petty professional jealousy, personal grudges, and other conflicts of interest created by ambition, academic competition, and so forth. Some studies have shown that this problem is even worse in blind and double-blind peer reviews, because reviewers can hide behind anonymity and offer reviews that they would not stake their professional reputation on.

So much for peer review as the ultimate standard of scholarly publication.

Advocates of wind energy would have you believe that anything that is not peer-reviewed should be discredited and disregarded. Let’s see how this idea holds up.

In 1904, if you had argued that apples fall from the tree to the ground because the immense mass of the Earth causes space to warp, you would have been treated to some strange looks. If you had claimed that time slows down as things speed up, or that matter and energy were really two forms of the same thing, you probably would have been diagnosed with dementia praecox (that’s what schizophrenia was called in those days). Truly, such ideas simply defy common sense. (Note: Good science often does.)

You would have been subjected to raucous laughter if you had mentioned that you learned all this from a third-rate clerk at a Swiss patent office in Bern.

And yet, as improbable as all this sounds, this is more or less what Albert Einstein did tell us in an article he published in a German periodical called Annals of Physics in 1905. It established one of the very pillars of modern physics for the next century.

Amazingly, Einstein’s work was not peer-reviewed at all. It was read by Max Planck, the pre-eminent physicist of the day, who gave it a wink and a nod. Then it was published. Since then, Einstein’s theories have been experimented with, scrutinized, and tested as much as any in history. Science must accept or reject them based on evidence alone, not a “peer reviewer’s” authority or opinion.

Einstein’s ideas—most, at least—have been confirmed over and over again.

Based on the “gold standard” of peer review, however, we are presumably expected to discard the theory of relativity until it has been properly peer reviewed.


In 1953, two Cambridge University scientists, James Watson and Francis Crick, published a paper in the journal Nature claiming that the chemical structure of DNA, the code for all life on Earth (and probably the universe), is a double helix, like a spiral staircase, which in fact gave them the idea.

Again, they did so without a single peer review. It would seem that we must disregard the foundations of modern biology and genetics, too. The “gold standard” of peer review demands it, correct?

When given fairly and honestly, peer review can be a powerful ally of science. Often, peer review can provide an invaluable exchange of ideas between researchers. Sometimes it can be the beginning of fruitful collaboration between scientists, each of whom is holding a different piece of the same puzzle. But the idea that peer review is the final arbiter of science is absurd. If there is such a standard, it is, and must be, reproducibility. Replicability.

Darwin’s “Origin of Species” was not “peer-reviewed” before publication. But it was “replicated”—and it revolutionized biology.

Let’s face it. The chant of peer review coming from religious devotees of wind is becoming nothing more than lip service by those who have been turned into intellectual zombies by the incessant propaganda of a wind industry that places profits above health, politics before science, and opinion over genuine knowledge.

In the case of WTS, this chant has been used as a weapon of mass delusion, a device to dismiss a superb piece of science and a pioneering contribution to our knowledge about the impact of wind turbines on human health and wellbeing. This has been done because legitimate criticism and ground-level research only serve to strengthen the conclusions arrived at in this book.

If you are interested in some of the most cogent and legitimate criticisms of WTS that I have read, consider these:

» The study was done by interview and limited to available medical records.

» Participant memory limitations or distortions.

» Possible minimization or exaggeration effects.

» The study was limited to English-speaking subjects.

» Small case series sample.

» Limited duration of follow-up.

The details of these specific criticisms and limitations of the Pierpont report can be found on pages 124-125 of WTS. Pierpont herself wrote them to alert her peers and fellow clinicians and to identify the limitations of her own work, undoubtedly realizing that the study should be done on a much larger scale to address them. This was a task she did not have sufficient resources to do herself.

Calling attention to the defects or limitations of your own study does not invalidate it. On the contrary, it is one of the hallmarks of good science and an invitation to further study by other scientists who may be in a position to eliminate those limitations and either confirm or reject its conclusions on the basis of the evidence alone.

At the end of the day, you must ask yourself why a study of such profound social importance has not been repeated on a large scale. Could it be that those with the most to lose are afraid of what they will find?

“Wind Turbine Syndrome” is good science. The devotees of wind turbines, who would challenge the results reported in its pages, must do so on the basis of more good science—or not at all. Either they must exercise the Principle of Reproducibility or accept Ludwig Wittgenstein’s famous caution, “Whereof one cannot speak, thereof one must be silent.”


Curt Devlin


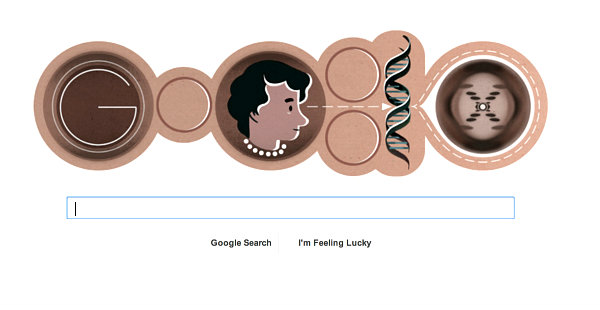

Editor’s note: Notice “Google’s” home page image, today. Celebrating Rosalind Franklin on her 93rd birthday.

Comment by Tom Whitesell on 07/24/2013 at 8:58 pm

This is an excellent, articulate piece! Thank you, Curt, for writing this! And thank you, WTS, for posting it.

Comment by Eric Rosenbloom on 07/24/2013 at 9:55 pm

Excellent essay! As one whose job is editing medical papers, I have always been amused at the invocation of “peer review,” especially as I later edit letters where much more critical peer review happens.

As you note, “peer review” in the usual sense is merely a process to get published, to meet the standards of research and reporting that a certain journal expects. It is not a judgement on the “correctness” of a paper’s conclusions, only on whether they are reasonably argued.

The “peer review” goon squad seems to think that science is “settled” and that only work that conforms with the “consensus” is properly published. Then, of course, we would not need any science journals, because one purpose of science is to continually challenge accepted wisdom. Even validating previous research is to “test” it. As you say, the test of scientific research is its reproducibility, and the test of scientific theory is its success in predicting outcomes. “Wind turbine syndrome” has held up well before both juries.

Comment by gail mair on 07/25/2013 at 8:23 am

Yes, I agree! Thank you, Curt and WTS.

Comment by Eric Rosenbloom on 07/25/2013 at 8:24 am

Another test of a study is whether it provides useful information to direct or inform further research. Again, “wind turbine syndrome” has done so. As you note, it is not the final word and was not meant to be. Like every scientific paper, it includes a discussion of its own limitations. Those limitations also direct or inform further research.

Comment by sue hobart on 07/25/2013 at 8:29 am

Thanks Curt… you’re the best!

I am still calling for medical and true scientific studies to begin in my home…. all peer reviewers are invited to participate…

Or is it better to sit in an ivory tower, reading and deciding whether or not WTS exists?

The world is corrupt and in this case too lazy to get off their butts and figure this out once and for all…

How does one go about getting a privately funded, unbiased research grant?

Anybody know a billionaire with a good heart? He can pick my house up for cheap and have at it.

Comment by Carl V Phillips, PhD on 07/25/2013 at 10:39 am

Curt, nice commentary. Having written a fair bit about the nature of peer review before (and having run a journal and had various other hands in the process), I entirely agree with your assessment about peer review. It does not provide the magic that is generally attributed to it, either by people who really do not know how it works or by those who cynically take advantage of the former. Peer review has become a fetish, with those who worship it not understanding that it is not the same as the real critical scrutiny that it serves as an idol of, and indeed that it does not do it very well anymore.

I might not go so far as to say that replication is a “gold standard”; it is, after all, quite possible to reliably reproduce the same effect of confounding. There need to be elements of proposed alternative hypotheses and testing of them in there too (which in the case of the health effects of IWTs also tends to support the case). But the “you can see the evidence and try it yourself” is indeed the key step that Robert Boyle used as the anchor of introducing the modern scientific method as a counterweight to the medieval notion of just appealing to authority (not much different from the peer review fetish).

A few additional details: The current institutionalized journal peer review system is a fairly modern invention. It did not even exist when Einstein first flourished. Indeed, as it came into being later in his career, he famously balked at participating in it. Arguably, it actually worked pretty well then, when there were far fewer papers and the peers really were that. This is no longer the case. The system was extant by the time of Watson and Crick, but their breakthrough was actually published in a letter rather than a reviewed article.

Comment by Sarah Laurie, MD, CEO Waubra Foundation on 07/25/2013 at 11:03 am

Curt, thank you so much for helping give others some well considered thoughts, to help expose the rubbish about peer review being touted by those who deny the serious health problems being reported.

Nina’s work is an extraordinary piece of honest hard work, in a contentious, difficult and complex area of clinical medicine, which has more than stood the test of time and serious scrutiny.

As with Nina’s work, your words have a clarity and honesty that cannot be dismissed or ignored.

Grateful thanks for your willingness to stand up and speak out about the fundamental abuse of vulnerable people, which is currently occurring around the world, and has been for years, with the full knowledge of the wind industry and the US government Department of Energy, thanks to Neil Kelley’s research.

Comment by Mike McCann on 07/25/2013 at 11:16 am

As a seasoned appraiser with a developing knowledge of property value trends near wind projects, I was invited to “peer review” the 2009 draft LBNL report by its principal author before publication. When I was informed that the underlying sale data was “confidential” and would not be released, I told the author that with such lack of transparency I could not complete a true peer review.

Never mind that absolutely no accepted appraisal standards were used or that the author was not a “peer” to or within the appraisal profession—I was willing to comment on the report, anyhow.

None of the nearby home sale data that revealed a 25% value loss within 2 miles, of which I informed the author, was cited, nor were my cautionary remarks, conclusions or lack of concurrence for his multi-contingent (meaningless) conclusions. In fact, the significant errors in the draft report regarding my prior independent study basis were not even corrected.

The point is that the mantra of “peer review” has been used to claim credibility of the author’s conclusions, despite the (peer) reviewer’s comments refuting the validity of his alleged findings.

Further, unlike the transparency of Dr. Pierpont, no reviewer comments were included in the final LBNL report. Yet they still publicly claim that it was “peer reviewed.”

I would not suggest that property value impacts are as debilitating as health impacts, but I am suggesting that Curt Devlin’s remarks about peer review are just as applicable to the property value impairment issue.

Comment by Mauri Johansson, MD, MHH (Denmark) on 07/25/2013 at 12:37 pm

Dear Curt,

What a good job! I have only a few supplementary comments to your fine presentation of what science is all about. We are not always able to do experiments: for ethical reasons where humans are at risk of harmful effects, or, let’s say, in astronomy. In those cases other approaches must be used.

It was the French philosopher Rene Descartes who so strongly argued for THE METHOD. Nearly to a point that if you got other results using the same method your experiment went wrong. But is that possible? The brilliant US physicist Richard P. Feynman, who can be strongly recommended, wrote that if you think it went “wrong” or you got other results than expected, perhaps the explanation was that some minor, perhaps quantum-level details, were different compared with what your predecessor did. It is nearly impossible to give such detailed information that things can be repeated 100%. Supplementary experiments must be carried out and minute analysis of ALL involved elements be carried out, again and again. But science is there to serve the whole of humankind—not to benefit the very few.

There is another rapidly growing problem. When research goes from neutral, independent, honest and open scientific environments and is moved to closed, well-hidden rooms in industrial contexts just to produce profits as fast as possible, then indeed many things go wrong in their “science.” As you write, Curt, if the pure science environment is suffocated and an open dialogue prohibited, then it has to go wrong, to get derailed, at some point in the process. Industrial competition is far from always a plus. Think of Big Tobacco, Big Oil, Big Pharma and now Big Wind. They have won decades by producing fake or junk science even as they demand more “research,” launching lawsuits and heaven knows what. So Big Wind has learned from these other industrial antecedents.

Today, we know that we can NEVER reach the “FINAL” truth, but we can approach it asymptotically and find the results useful, trustworthy and safe for humans, and wind turbine neighbors, too. The IWT industry should choose this ethical point of departure in their “act-on-fact” campaign, and the authorities should listen to the complaints of people and take proper action, instead of lending their ears to the seductive song from Big Wind.

Best,

Mauri Johansson, MD, MHH

Specialist in community and occupational medicine

Denmark

Comment by Eric Rosenbloom on 07/25/2013 at 8:12 pm

A case in point is the recent news that AGL Energy did not find infrasound (none at all!) in 2 houses some distance from their MacArthur, 420-MW wind turbine array in South West Victoria. The results were “reviewed” by Geoff Leventhall.

’Nuff said.

Comment by mark duchamp on 07/25/2013 at 8:54 pm

What a breath of fresh air! Many thanks to the author, Curt Devlin, and to Calvin for publishing it.

Many times, over the years, I fought corrupt ornithologists on international ornithology forums. “Peer reviewed” studies were thrown at me to dismiss my theory, which is that wind turbines are threatening the survival of many bird and bat species.

I shall be vindicated in the end, but it is evident that the peer review system is presently being used by vested interests to keep certain things hidden from the public. Scientific journals refuse to publish articles that would challenge “the settled science” in certain domains, and this will have dire consequences on people as well as biodiversity.

This, for instance, is not peer-reviewed, yet it is compelling.

Even if scientific journals were not corrupted by dogma, money, or politics, many valuable theories would never get published because they are not presented to them in the right format—just another case of bureaucracy stifling productivity.

On the other hand, scientists who swim with the current of “settled science” know very well how to “work” scientific journals, and they inundate the market with peer-reviewed studies that are often worthless, but financed by vested interests.

Comment by Jutta Reichardt, EPAW-Spokesperson for Germany on 07/25/2013 at 9:28 pm

Wonderful conversation after the fabulous thoughts by Curt. Thank you all!

I was curious, so I looked it up to learn the meaning of “peer review” in everyday German. And I found this:

dynamic peer-reviewed open peer review {noun}

interactive peer review, open peer review {noun} open peer review

Aha! Peer review can be open, interactive and dynamic!

Now everything becomes clear: We, the long-term guinea pigs of the German wind power profiteers, are the true “peer reviewers”! Jutta and Marco from Northern Germany, exhibiting all the WTS symptoms, which we have acquired over the course of 18 years and 3 months, living near 6 wind turbines!

It was a dynamic process of a destructive increase of disease symptoms, exactly as described by Nina Pierpont in “Wind Turbine Syndrome.” Always involving the interaction between wind turbines and our organs, in addition to the Vibro-Acoustic Disease described by Mariana Alves-Pereira, together with Sarah Laurie’s discoveries about blood pressure and heart rhythm problems.

Now it’s open-ended: death or survival. Who is stronger, the wind turbines or us?

All the other tragic victims of the wind industry, who now suffer as we did, are the next “reproducibility” that Curt has written about.

Thanks to you all, helping us to survive this plague through understanding and with enough power, to fight the perpetrators! We’ve written about this “hell on earth”—our personal peer review—in our Windwahn Story in http://www.windwahn.de.

Warm wishes to all,

Jutta (Germany)

Comment by Marshall Rosenthal on 07/26/2013 at 6:28 pm

Elegant thinking, Curt! Just look at the responses that you have stimulated. Ethical thinkers, people who have both experienced the chicanery of the establishment and the misery of having been made the victims of technocracy, have come forward with their testimony.

This is the “golden standard” we seek. It is called the truth.

Comment by Kaz on 07/26/2013 at 11:10 pm

Thank you, Curt (et al…)

NOW! What do we DO with this? With the knowledge, the passion, the conviction that WTS is real? With the knowledge that people are truly suffering? That an industry is being given a free pass from our own governments while it tortures people? Destroys their health, their quality of life, their property values? The industry has trashed Nina and other intelligent, brave (and classy) citizens for years and they have bought themselves time in doing so. They’ve bought time to build more wind facilities and time to pocket millions more in tax-payer dollars.

We write and we write… and thank God we do! But we’re preaching to the choir. How do we take all that we’ve learned, all that we KNOW, and excellent essays like the one Curt’s written here… and make them COUNT?

REALLY count?

Thank you Curt… and Calvin and Nina and Sarah and Mike and Marshall and Carl and Mark and all the rest. I have the deepest respect for you. Please help me to figure out what to DO with all that we’ve learned and all that we know. Who needs to hear this? Who has the power to change the status quo? Who will listen and act? Someone must… and soon.

Respectfully,

Kaz Pease

Lexington Twp., Maine

Comment by Joseph A Olson on 08/05/2013 at 7:40 am

Connecting the dots, we have ‘post-normal’ science used by monarch-monopolists, whose Darwin-Malthus-Nihilist philosophy is then imposed for the goal of neo-feudalism. A multi-generational crime syndicate has control of government, media, education and peer review science. Traditional science and evidence are inconvenient. The environmental movement involves three Carbon lies: Carbon climate forcing, ‘sustainable’ energy and ‘peak’ oil. There is NO possible way that 28 giga-tons of human produced, naturally occurring three atom gas can control the temperature of 258 trillion cubic miles of mostly molten rock and 310 million cubic miles of ocean at 4C. There is NO ‘sustainable’ energy that produces more energy than its required INPUT [or investment] energy. Hydrocarbons exist throughout the Universe and are the required precursor to life, not a limited residue of past life. A summary of these defects is in “Becoming A TOTAL Earth Science Skeptic”.

The reason for these defects, and the massive defects in other science and in reporting of ‘history’ is to conceal the underlying defect of our imperial legacy, our defective monetary system. For that see “Fractional Reserve Banking Begat Faux Reality”. The internet has provided our first HONEST, world wide review of evidence about our True condition and the system of robber baron control. We have limited time to correct these defects before this HONEST option is removed and an Orwellian structure is imposed. Find and share Truth….it is your duty as an Earthling.

Comment by Martin Cornell on 08/05/2013 at 11:25 am

Once upon a time, there existed the concept of “hypothesis” as part of the scientific method. In the flow of things, “hypothesis” held the spot indicated by “theory” in this essay. That is, “hypothesis” was the conjecture part of the scientific method. A “theory” was one possible output of the scientific method, i.e. that part that was supported by the evidence and that proved predictable. Indeed the separate meanings for “hypothesis” and “theory” are the definitions of the National Academy of Sciences, to wit:

Too many unproven concepts are prematurely inflated to theory status. The concept of dominant anthropogenic warming of the globe is one current example. Pierpont’s WTS hypothesis has passed the first round of falsification testing, and is on the path toward theory.

Cheers!

Marty Cornell